In this second part of our SAM Foundation series we look at Compliance Reporting and the importance of understanding your deployment position. In part one we covered the importance of a full data collection across your data sources, together with your contract and licensing information. Now we look at how to bring that together into a compliance position. The first realisation is - wow! - that's a lot of data we have out there! So just as we needed tooling to perform the data gathering exercise, we are going to need analytics to decipher not only what's important but also how to interpret it all. There are two aspects to this:

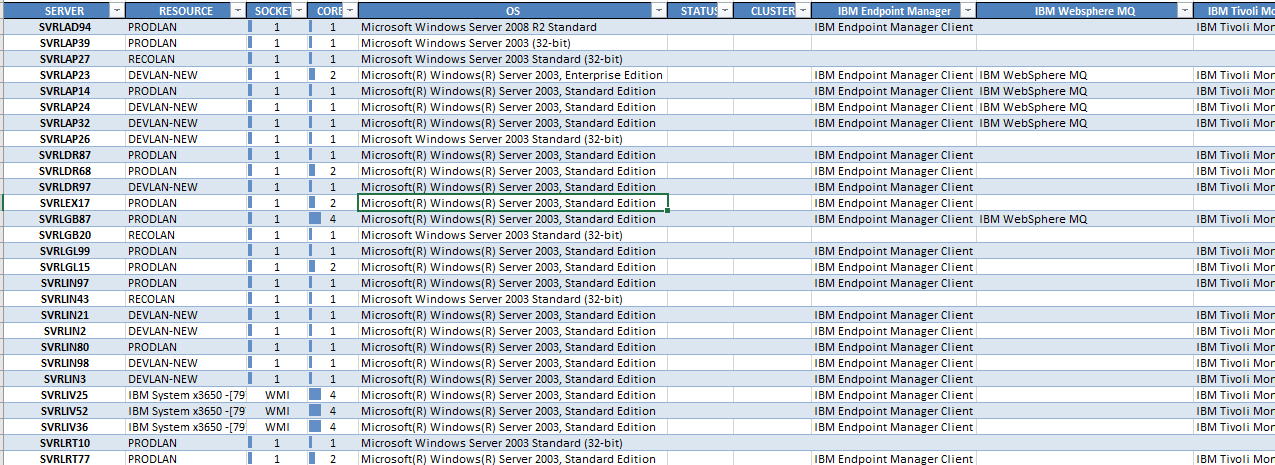

Now what exactly do we mean by 'Scale Reporting'? Basically, it means a reporting facility that lets you set variable parameters, from product to vendor to company, with the output organised by device in a concise and easily readable form. One example is ComplianceWare's Python and pandas based analytics engine, which slices and organises the data and outputs it as a familiar Excel workbook. A snapshot of the output is shown below:

The analytics should also consider base licensing metrics such as server core and PVU minimums, apply relevant bundling rules to avoid double counting, and recognise non-chargeable installations such as clients and free-edition software.

So we now have our first view of what's deployed where - and that's a good start, but it doesn't mean the job's done. You'll want to perform some spot / sanity checks across the report, and that's where 'Direct Examination and Querying' comes in. Here, your tool should allow you to easily interrogate your data collection (which can span many millions of rows) for further review and confirmation. That is accomplished via features that let you slice, limit and target the fields and items of interest. Again with ComplianceWare as an example, you can navigate through the data by vendor, product and data source, and run searches with inclusion and exclusion parameters to find exactly what you are after.

OK ... we're happy with our deployment report - now what? Now it gets interesting - does what's reported as deployed match what we're actually entitled to? While some products can be tallied automatically (e.g. products with simple install or device metrics), others will require more effort: resource-based metrics such as cores, logical licenses such as users, and products in more complex settings such as virtual environments, where physical versus virtual considerations must be taken into account.
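As a rough illustration of the steps described so far, the following Python sketch derives a licensable quantity from install records and compares it with entitlements. It uses only the standard library and is not ComplianceWare's engine. The product names, rule tables, field names and quantities are all invented for this example, and real core minimums and bundling rules come from each vendor's terms.

```python
from collections import defaultdict

# Hypothetical rule tables, invented for illustration only.
CORE_MINIMUM = {"DB Server": 4}               # minimum cores charged per server
NON_CHARGEABLE_EDITIONS = {"Client", "Free"}  # installs that need no license
BUNDLES = {"DB Tools": "DB Server"}           # component -> product that covers it


def licensable_demand(installs):
    """Return {product: quantity required} from a list of install records."""
    # Record which products sit on each device so bundled components can be spotted.
    on_device = defaultdict(set)
    for row in installs:
        on_device[row["device"]].add(row["product"])

    demand = defaultdict(int)
    for row in installs:
        product = row["product"]
        if row["edition"] in NON_CHARGEABLE_EDITIONS:
            continue  # clients and free editions are not counted
        parent = BUNDLES.get(product)
        if parent in on_device[row["device"]]:
            continue  # covered by the parent product on the same device
        if product in CORE_MINIMUM:
            # Core-based metric: charge at least the minimum per server.
            demand[product] += max(row["cores"], CORE_MINIMUM[product])
        else:
            demand[product] += 1  # simple per-install metric
    return dict(demand)


def compliance_position(demand, entitlements):
    """Return {product: entitled - required}; a negative value is a shortfall."""
    products = sorted(set(demand) | set(entitlements))
    return {p: entitlements.get(p, 0) - demand.get(p, 0) for p in products}


# Made-up sample data.
installs = [
    {"device": "srv01", "product": "DB Server", "edition": "Standard", "cores": 2},
    {"device": "srv01", "product": "DB Tools", "edition": "Standard", "cores": 2},
    {"device": "srv02", "product": "DB Server", "edition": "Standard", "cores": 8},
    {"device": "pc01", "product": "DB Server", "edition": "Client", "cores": 4},
    {"device": "pc02", "product": "Diagram Tool", "edition": "Standard", "cores": 4},
    {"device": "pc03", "product": "Diagram Tool", "edition": "Standard", "cores": 4},
]
entitlements = {"DB Server": 8, "Diagram Tool": 2}

demand = licensable_demand(installs)
position = compliance_position(demand, entitlements)
print(position)
```

With this sample data the two-core server is charged at the four-core minimum, the bundled tools and the client install are skipped, and the result is a four-core shortfall for the hypothetical "DB Server" while "Diagram Tool" balances exactly. The sketch assumes one record per device and product. A real collection spanning several data sources would need de-duplication first.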
For these more involved products there are no short-cuts - it will require a knowledgeable individual (preferably with prior experience in the environment) to work through each product in a methodical and calculated manner to (a) derive the optimal licensing construct and then (b) reconcile it against the recorded (and evidenced) level of licensing. As this progresses it is imperative to capture your findings and ensure they are lodged as an artefact, both for audit readiness and as a baseline for future reporting cycles (again, with ComplianceWare this can be stored as 'Verification' material alongside the updated actual usage figures).

And just how often should the whole exercise be performed? We'd recommend that you cover your major vendors at least annually, and institute a program of work that targets a select number of products or vendors each quarter. The good news is that once you've completed one cycle, later ones become easier, as you'll have a baseline to compare against or start from.

So to summarise:

